Professor

Cristóbal Curio, Prof. Dr.-Ing.

Building 9

Room 227

Phone +49 7121 271 4005


Since November 2014 I have been a full Professor of Cognitive Systems in the Informatics Department at Reutlingen University. I am also affiliated with the Department of Informatics at Eberhard Karls University of Tübingen and am a permanent guest scientist at the Max Planck Institute for Biological Cybernetics, where I headed the Applied Cognitive Engineering group until 2013.

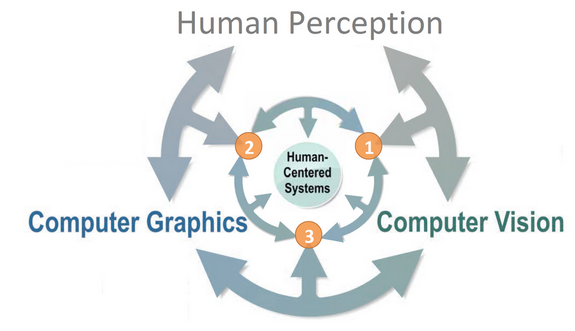

In industry I led projects in autonomous driving and human-machine interaction design. In my Cognitive Systems research group we develop and apply methods from computer vision, computer graphics, virtual and augmented reality, and machine learning, combined with methods from applied human perception research, to optimize and test the interfaces between emerging technologies and their end users. My main goal is to combine human and machine intelligence optimally (Human-Centered Artificial Intelligence).

Our research addresses the question of how to build components for robust human-centered computing. We treat empirical experimental approaches and computational approaches on an equal footing and combine them to develop new components for assistive systems. The figure sketches this research process. New insights at the interfaces between disciplines allow us to move even closer to human-centered system functions. Most of our research aims directly at a better understanding of human perception and, at the same time, yields novel constraints for designing assistive systems. Our research approach also produces novel tools that support further research.

Examples of our latest research can be found on our publications and project pages. Our work addresses research questions and system design at the interfaces between classical research domains, as outlined below.

1 Interfacing Human Perception and Computer Vision

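- Neurorobotics (press coverage, in German: Bild der Wissenschaft, „Griff-Technik für die gelähmte Hand“ [Grip technology for the paralyzed hand] (www); Bild der Wissenschaft plus, „Jetzt ist morgen – Wie Forscher aus dem Südwesten die digitale Zukunft gestalten“ [Now is tomorrow: how researchers from the southwest are shaping the digital future] [pdf])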

- Increasing situation awareness with a new detectability concept

- Computational predictors of situation awareness

- Fusion of human and machine intelligence (Human-centered AI)

- Architectures for deep semantic scene understanding

2 Interfacing Human Perception and Computer Graphics

- 3D scanning for animation (faces, full bodies, objects)

- Perceptual real-time interactive 3D animation control

- Mechanisms of dynamic and emotional face perception

- Multimodal feedback systems for sensory augmentation and substitution

3 Interfacing Computer Graphics and Computer Vision

- Scene and sensor simulation models for vision-system prototyping (Technology Review, "AI learns to learn", in German)

- Motion capture and simulation facilities [Video-Interview]

- Transfer learning [VAD KI-Leitinitiative, BMWi KI-DeltaLearning]

- Markerless pose estimation

- Deep generative and statistical shape modelling

- Affective computing and behavior understanding

- Learning-based deformable surface tracking

- Intelligent process automation in materiomics: simulation, optimization and deep learning (Interdisciplinary PhD School)

Unterwegs in die Zukunft, Autonomes Fahren [On the way to the future: autonomous driving] [Schwäbisches Tagblatt, in German]

Das Auto erkennt Gesten und Grimassen [The car recognizes gestures and grimaces] [Re:search Magazin, p. 9, in German]

Handshake mit dem Avatar [Handshake with the avatar] [Camplus Magazin, 2019 (Reutlingen University), in German]

| PEER-REVIEWED JOURNAL ARTICLES |

de la Rosa S., Fademrecht L., Bülthoff H.H., Giese M.A., Curio C. (2018) Two ways to facial expression recognition? Motor and visual information have different effects on facial expression recognition, Psychological Science 29(8), pp. 1257-1269.

Chiovetto E., Curio C., Endres D., Giese M. (2018) Perceptual integration of kinematic components in the recognition of emotional facial expressions, Journal of Vision 18(4), pp. 1-19. ISSN: 1534-7362.

Dobs K., Bülthoff I., Breidt M., Vuong Q., Curio C., Schultz J. (2014) Quantifying human sensitivity to spatio-temporal information in dynamic faces, Vision Research 100, pp. 78-87.

| PEER-REVIEWED CONFERENCE ARTICLES |

Ludl D., Gulde T., Curio C. (2019) Simple yet efficient real-time pose-based action recognition, 22nd IEEE International Conference on Intelligent Transportation Systems (ITSC), October 27-30.

Gulde T., Ludl D., Andrejtschik J., Thalji S., Curio C. (2019) RoPose-Real: Real World Dataset Acquisition for Data-Driven Industrial Robot Arm Pose Estimation, IEEE International Conference on Robotics and Automation (ICRA 2019), May 20-24, Montreal, pp. 1-8.

Ludl D., Gulde T., Thalji S., Curio C. (2018) Using simulation to improve human pose estimation for corner cases, 21st IEEE International Conference on Intelligent Transportation Systems (ITSC), pp. 3575-3582. (Runner-Up Best Paper Award)

Baulig G., Gulde T., Curio C. (2019) Adapting Egocentric Visual Hand Pose Estimation Towards a Robot-Controlled Exoskeleton. In: Leal-Taixé L., Roth S. (eds) Computer Vision – ECCV 2018 Workshops. ECCV 2018. Lecture Notes in Computer Science, vol. 11134. Springer.

Gulde T., Ludl D., Curio C. (2018) RoPose: CNN-Based 2D Pose Estimation of Industrial Robots, 14th IEEE Conference on Automation Science and Engineering (CASE), Munich, August, pp. 463-470.

Gulde T., Kärcher S., Curio C. (2016) Vision-Based SLAM Navigation for Vibro-Tactile Human-Centered Indoor Guidance. In: Hua G., Jégou H. (eds) Computer Vision – ECCV 2016 Workshops. ECCV 2016. Lecture Notes in Computer Science, vol. 9914. Springer.

Schuster F., Zhang W., Keller C.G., Haueis M., Curio C. (2017) Joint graph optimization towards crowd based mapping, IEEE 20th International Conference on Intelligent Transportation Systems (ITSC), 2017, pp. 1-6.

Breidt M., Bülthoff H.H., Curio C. (2016) Accurate 3D head pose estimation under real-world driving conditions: A pilot study, 2016 IEEE 19th International Conference on Intelligent Transportation Systems (ITSC), 2016, pp. 1261-1268.